Why Your AI Tools Feel Like Managing an Intern

Dave Graham

Principal Consultant

February 17, 2026

Most companies using AI are having the same experience: it's helpful, sort of, but requires constant supervision. You ask ChatGPT to draft an email, then spend ten minutes fixing it. You automate a report, but the output needs so much hand-holding you wonder if you should've done it yourself.

The common assumption: the AI isn't good enough yet. Wait for GPT-6, or Claude 5, or whatever's next.

What we keep seeing instead: the model is rarely the bottleneck. The bottleneck is the readiness of your people and the maturity of your processes.

The Two-Sided Problem

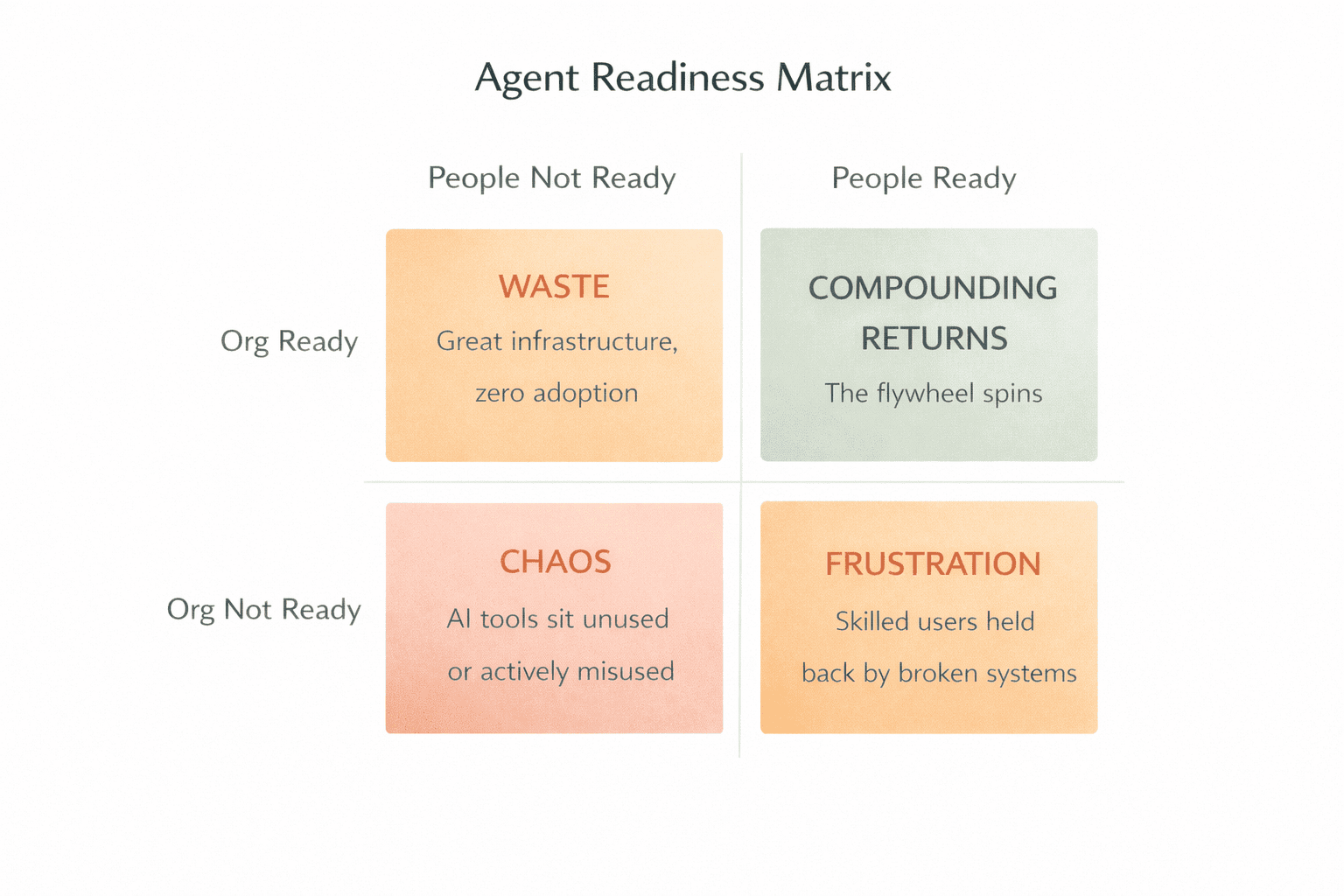

Agent success requires both ready people and a ready organization. Most companies only think about one side.

The gap we see over and over: Level 1 people wanting Level 4 results, inside Level 2 organizations, blaming the AI.

People Readiness: The Four Levels

Watch how people actually use AI, and a pattern emerges:

Level 1: Search replacement. You use ChatGPT like a smarter Google. Summarize this. Explain that. Draft something. Useful, but barely scratching the surface. This is where most professionals are.

Level 2: Custom prompts with training wheels. You've built a few GPTs or saved prompts for recurring tasks. But you're still babysitting, confirming every step, fixing every output. This is the "managing an intern" stage.

Level 3: Autonomous task execution. The AI handles defined jobs without asking permission. Research, analysis, report generation. It runs, you review the output. Human-in-the-loop, but the loop is at the end, not every step.

Level 4: AI as operating system. You work primarily through AI interfaces. Claude Code for development, agents for research, automated workflows for operations. You allocate tasks to AI the way a manager delegates to a team.

Most companies have Level 1 people trying to jump to Level 4. They blame the model. The model isn't the problem.

Organization Readiness: The Infrastructure Side

Even skilled AI users fail in organizations that aren't set up for agents. Your org infrastructure matters just as much as your people.

The key questions:

- Can an agent find what it needs? Or does critical knowledge live in people's heads?

- Are workflows explicit enough to follow? Or does the agent not know when it's done?

- Can agents access your systems? Or can they only read, not act?

- Is your data clean and current? Or will agents hallucinate from stale context?

- Can agents verify their own work? Or do they need approval on everything?

When processes live in Slack threads and tribal knowledge, AI has nothing to learn from. It guesses. It hallucinates. It asks you to confirm every step because it has no ground truth to reference.
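One way to make the five questions above concrete is to answer each with a plain yes or no and list what comes back as a blocker. The sketch below is only an illustration: the `ReadinessCheck` class, the `blockers` function, and the sample answers are all hypothetical, and it is not a scoring method from any formal assessment.

```python
from dataclasses import dataclass


@dataclass
class ReadinessCheck:
    question: str  # the "ready" half of a question from the list above
    blocker: str   # the failure mode named in the second half
    ready: bool    # your own honest yes/no answer


def blockers(checks: list[ReadinessCheck]) -> list[str]:
    """Return the failure mode for every question answered 'no'."""
    return [c.blocker for c in checks if not c.ready]


# Hypothetical answers for an imaginary team; replace with your own.
checks = [
    ReadinessCheck("Can an agent find what it needs?",
                   "critical knowledge lives in people's heads", False),
    ReadinessCheck("Are workflows explicit enough to follow?",
                   "the agent does not know when it's done", True),
    ReadinessCheck("Can agents access your systems?",
                   "agents can only read, not act", False),
    ReadinessCheck("Is your data clean and current?",
                   "agents hallucinate from stale context", True),
    ReadinessCheck("Can agents verify their own work?",
                   "agents need approval on everything", True),
]

for item in blockers(checks):
    print("Blocked:", item)
```

Anything that prints here is an organizational gap, not a model gap, which is the point of asking the questions before blaming the tool.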

The Documentation Test

One question predicts whether a task can be automated:

"Could you write instructions detailed enough for a stranger to do this job?"

If yes, an AI can probably do it. If the answer is "well, it depends" or "you kind of have to know...", then it's still a human task, at least for now.

The companies that get the most from AI share one trait: their processes are written down. Not because they planned for AI, but because documented businesses run better with or without automation.

A 10-Minute Exercise

List your ten most repetitive tasks this week. Mark which ones you'd confidently hand to a smart intern on day one, using nothing but written instructions.

Those marked tasks? That's your automation surface area.

For each one, write the delegation instructions as if training that intern:

- What inputs does this task need?

- What does "done" look like?

- What should they do if something's unclear?

- How would you check their work?

You just wrote your first agent prompts.
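To see how those four answers turn into a prompt, here is a minimal sketch. It assumes nothing about any particular AI tool: the `DelegationSpec` class, its field names, and the weekly report example are all hypothetical, chosen only to mirror the four questions above.

```python
from dataclasses import dataclass


@dataclass
class DelegationSpec:
    task: str
    inputs: list[str]   # What inputs does this task need?
    done_when: str      # What does "done" look like?
    if_unclear: str     # What should they do if something's unclear?
    how_to_check: str   # How would you check their work?

    def missing(self) -> list[str]:
        """Names of fields left blank; a blank field means the task isn't documented yet."""
        return [name for name, value in vars(self).items() if not value]

    def to_prompt(self) -> str:
        """Render the spec as plain-text instructions, refusing incomplete specs."""
        gaps = self.missing()
        if gaps:
            raise ValueError(f"Spec incomplete, fill in: {', '.join(gaps)}")
        input_lines = "\n".join(f"- {item}" for item in self.inputs)
        return (
            f"Task: {self.task}\n\n"
            f"Inputs you will be given:\n{input_lines}\n\n"
            f"Done means: {self.done_when}\n\n"
            f"If something is unclear: {self.if_unclear}\n\n"
            f"Your work will be checked by: {self.how_to_check}\n"
        )


# Hypothetical example task; swap in one from your own list of ten.
spec = DelegationSpec(
    task="Draft the weekly sales summary",
    inputs=["This week's sales export (CSV)", "Last week's summary"],
    done_when="A one-page summary with totals by region and three notable changes",
    if_unclear="Stop and list your questions instead of guessing",
    how_to_check="Spot-checking two regional totals against the export",
)

print(spec.to_prompt())
```

The refusal to render an incomplete spec is deliberate: if you can't fill in a field, that is the "well, it depends" signal from the documentation test, and the task isn't ready to hand off.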

The Compound Effect

When you document a process to automate it, you've also:

- Created training material for new hires

- Built an audit trail for quality control

- Identified inefficiencies you couldn't see before

- Made the process improvable

The documentation compounds. Better docs mean more reliable AI output. More reliable output means the AI handles more work. That frees time to document more processes. The flywheel spins.

Companies spending six figures on AI tools while their processes stay undocumented are buying sports cars to drive in parking lots. The tools aren't the constraint. The environment is.

Before you upgrade your AI subscription, ask: Is your business ready for the AI you already have?

We run a half-day Agent Readiness Assessment that scores both sides of the equation: your people and your organization. You walk away with a clear picture of where you're blocked, a prioritized roadmap, and quick wins you can implement that week.