Enterprise AI Agents: What the Platform Vendors Won't Tell You

Dave Graham

Principal Consultant

February 10, 2026

Three major AI launches in a single week in early February 2026. OpenAI Frontier — a full enterprise agent platform. Claude Opus 4.6 with a million-token context window. GPT-5.3-Codex, which OpenAI claims was "instrumental in creating itself."

Every one of them promising "AI coworkers" that plug into your CRM, HR systems, and databases — automating workflows while you sleep.

Here's what none of them will say on a sales call: platforms don't change organizations. People do.

Fidji Simo, OpenAI's CEO of Applications, admitted that even at Instacart they "spent months integrating" AI tools. That was with a technical CEO driving it. Your VP of Operations doesn't have months. They have a day job, a team that's skeptical, and workflows nobody's documented well enough to automate.

Which processes should agents handle vs. humans? Who's accountable when an agent makes a mistake? How do you train people to work with agents instead of around them? Remember when Salesforce was going to be "plug and play"? Now there's an entire consulting industry fixing implementations that went sideways. AI agents are more autonomous and touch more systems. The blast radius is bigger.

What the Vendors Are Actually Building

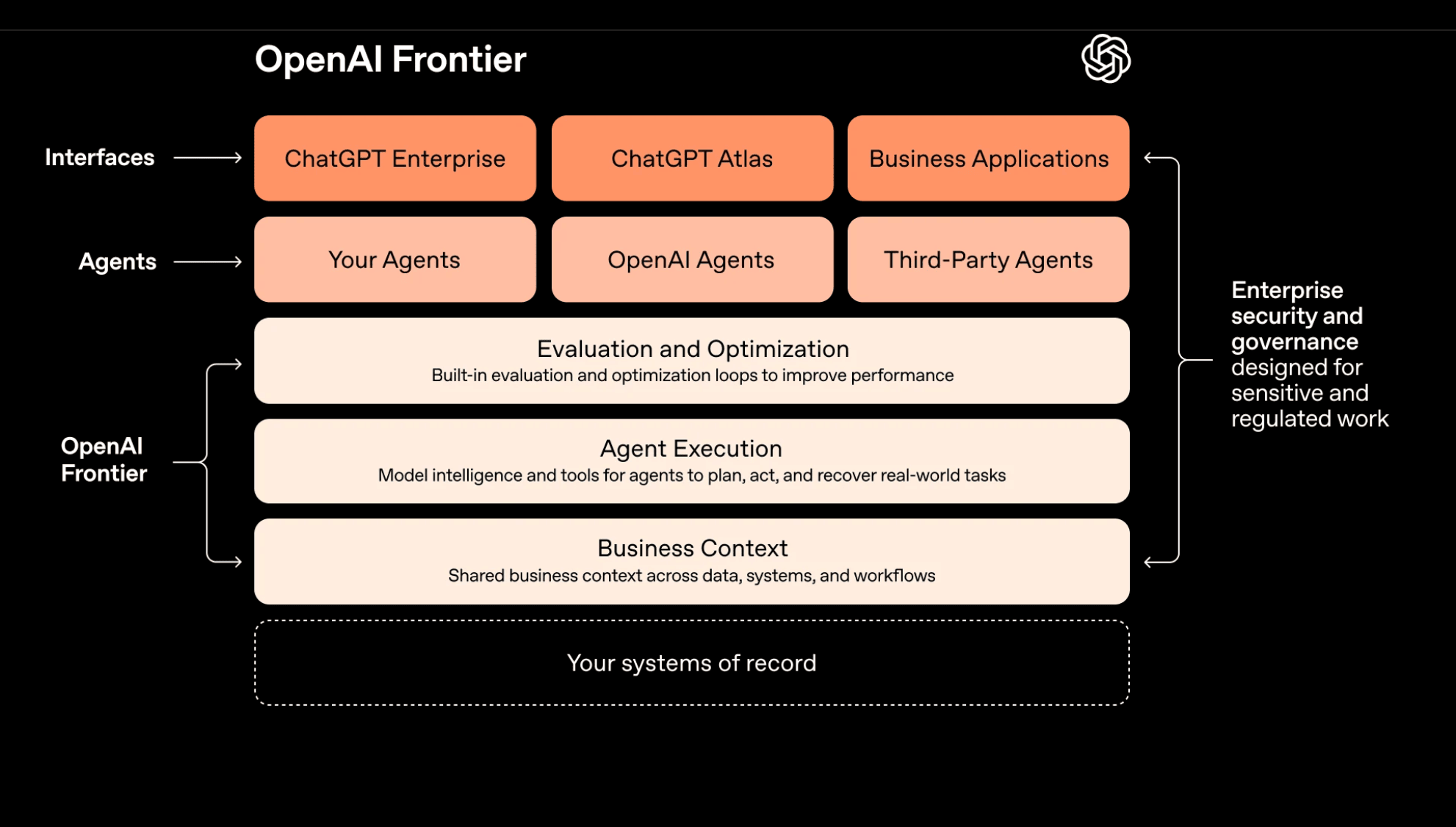

Look at the OpenAI Frontier architecture:

Source: OpenAI Frontier announcement, February 2026

Source: OpenAI Frontier announcement, February 2026

Count the layers. Business Context, Agent Execution, Evaluation and Optimization — all solid, all filled, all explicitly labeled "OpenAI Frontier." Your systems of record are at the very bottom in a dashed border — not part of the stack, just the thing it sits on. The only place you appear is one-third of the agent row ("Your Agents"), sandwiched between "OpenAI Agents" and "Third-Party Agents." And on the right side, they're claiming "enterprise security and governance" too.

That's not a tool. That's a bid to become the operating system between your people and your data. Anthropic is making the same play with Claude Cowork.

The incumbents are already proving the problem. Salesforce has poured a billion dollars into Agentforce and it's flailing — because embedding agents in a CRM doesn't solve the workflow, governance, and change management problems that determine whether anyone actually uses them. Microsoft Copilot is in millions of seats and actively holding organizations back from innovation, because it shipped the technology without the organizational readiness to use it well. More seats ≠ more value.

The frontier labs are racing to own the orchestration layer of the enterprise. That's where the margin is. It's also where the lock-in is. And the technology is advancing faster than most organizations can absorb it. The vendors don't need you to be ready. They need you to buy.

The question isn't which platform is best. It's whether your organization is ready for any of them.

I've Seen This Movie Before

I've been on the inside of major ERP implementations. Here's what actually happens: the go-live never lands on time. Everyone — from project leads to department heads — is confused about what's changing, when, and why. And the person most affected by the new system, the end user who has to live in it every day, is the last one anyone thinks to involve.

Leadership picks the platform. IT configures it. The end user gets a training webinar and a go-live date. Then they spend six months building workarounds because nobody asked them how they actually do their job.

This is a pattern, not an accident:

ERP (1990s-2000s): SAP and Oracle promised integrated enterprise systems. Implementations took years, cost millions, and spawned an entire consulting industry to make them work.

Cloud (2010s): AWS and Azure promised infinite scale. "Lift and shift" migrations that were supposed to take months took years. Cloud consulting became a multi-billion dollar market.

SaaS (2015-2020s): Salesforce, Workday, ServiceNow promised easy deployment. The average enterprise now has 100+ SaaS tools, most poorly integrated, with shadow IT running rampant.

Every wave, the same failure mode: the people closest to the work are the farthest from the decisions about how to change it.

Why AI Agents Are Different

AI agents differ from previous tech waves in one way that changes everything: they make decisions.

An ERP system processes transactions. A SaaS tool stores data. An AI agent decides what to do next.

That changes the accountability model entirely. When an agent approves a $50K purchase order it shouldn't have, who's responsible? The vendor? IT? The agent itself?

It's the business process owner. The person who owns the workflow owns the outcome — the same way they'd be responsible if they gave a new hire signing authority without spending limits. An AI agent is delegation at machine speed.

In the military we had a truism, codified in doctrine: you can delegate authority but you cannot delegate responsibility. It doesn't matter how capable the subordinate is. The commander who delegated the authority owns the outcome. AI agents are not different in this regard. They're just subordinates that execute at machine speed without the judgment to know when they're wrong.

Even the people building these tools know it. Greg Brockman, OpenAI's president, recently told his own engineers to "ensure that some human is accountable for any code that gets merged" — even when AI writes all of it. If the company building the agents is telling its people that humans own the output, that should tell you something about where the accountability lands in your organization.

The governance model shouldn't be invented from scratch. It should be your existing delegation-of-authority framework, adapted.

But here's the problem: most business process owners don't have the literacy to set those rules today. They can say "nothing over $50K without my approval." They can't translate that into agent guardrails, test edge cases, or anticipate how an agent interprets ambiguity when a purchase order is split across three line items that individually fall under the threshold.

That gap — between owning the outcome and being equipped to govern it — is where implementations break down. No platform closes it. It requires new muscles: understanding what agents can and can't do, learning to specify rules precisely enough for autonomous execution, and building feedback loops to catch what the rules miss.

What Actually Works

Map your decisions before you automate them. This is different from process mapping for SaaS. You're not just documenting who does what — you're identifying where judgment happens. Which approvals are rubber stamps? Which ones require context a human holds in their head? An agent can handle the rubber stamps tomorrow. The judgment calls need guardrails, escalation paths, and a process owner who understands what the agent can and can't see. Most organizations haven't done this work. They should do it before they buy anything.

Pilot one workflow, not one department. Pick a single decision-heavy process — expense approvals, lead qualification, ticket routing — and let an agent handle it for one team. But the point of the pilot isn't proving the technology works. It's learning where humans override the agent (and why), what rules you thought were clear but the agent interpreted differently, and which edge cases your governance model doesn't cover. Those lessons are worth more than the automation itself, because they apply to every agent you deploy after.

Budget for the 80% that isn't software. Agentforce licenses aren't expensive. Training your operations team to define agent guardrails, redesigning approval chains for human-AI collaboration, building escalation protocols for when agents get it wrong, and managing the team members who will quietly work around the agent instead of with it — that's where the money goes. The organizations that treat the platform cost as the project cost end up with expensive shelfware.

Get help from people who aren't selling you a platform. Vendor sales engineers want to close deals. Their deployment timeline assumes your org chart, processes, and data are ready. They rarely are. The value of an outside practitioner isn't technical implementation — it's asking the questions your vendor won't: which workflows aren't ready for agents, where your data governance has gaps, and what your team actually needs to trust an AI coworker.

Bottom Line

95% of AI pilots never reach production. The gap isn't technical. It's readiness — whether your people can govern autonomous agents, whether your processes are documented enough to automate, and whether your organization can absorb change at the speed these platforms demand.

The platforms will keep shipping. OpenAI, Anthropic, Salesforce, Microsoft — they're not waiting for you to be ready. The question is whether you do the preparation work before you buy, or pay to undo the damage after.

If you're staring at these announcements and wondering where to start, let's talk. Not about which platform to pick — about whether your organization is ready for what comes next.